Is Google’s AI technology LaMDA sentient? Google fires the engineer who made the claim

Google recently fired the engineer who claimed that an unreleased AI system had become sentient. The company confirmed that the engineer had violated its employment and data security policies.

Blake Lemoine, a software engineer at Google, claimed that a conversation technology named LaMDA had gained a level of consciousness. He reached that conclusion after exchanging thousands of messages with the application.

Google said that it first put the engineer on leave in June, and that it dismissed Lemoine’s “wholly unfounded” claims only after reviewing them thoroughly. The engineer had been at Alphabet for seven years.

What is Google’s AI Technology LaMDA about?

Google has stated that it takes the development of AI very seriously and that it is committed to ‘responsible innovation’.

Google is one of the leaders in the creation of AI technology, which includes LaMDA, or ‘Language Model for Dialogue Applications’. LaMDA is a machine learning application that Google presents as a breakthrough on the puzzle of conversation, one of the most versatile human tools and one of the things that distinguishes humans from machines.

Human conversations tend to revolve around various topics and can be open-ended. This meandering quality can quickly stump conventional conversational agents (commonly known as chatbots), which tend to follow narrow, pre-defined paths.

LaMDA, by contrast, can engage in free-flowing conversation about a seemingly endless number of topics. This ability could unlock innovative ways of interacting with technology and entirely new categories of helpful applications.

It took years to develop LaMDA’s conversational skills. Like many recent language models, LaMDA is built on Transformer, a neural network architecture. It produces a model that can be trained to read many words (a sentence or paragraph), pay attention to how those words relate to one another, and then predict which words are likely to come next.
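The “pay attention, then predict” idea can be illustrated with a toy sketch. The code below is not LaMDA and is not Google’s code. It is a minimal, assumed example of scaled dot-product attention, the core operation of the Transformer, written in plain Python. The three words and their two-dimensional vectors are made up for illustration, and the same vectors stand in for queries, keys and values, whereas a real model learns separate, much larger ones.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    # Compare the query with every key (dot product), then scale.
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # Blend the value vectors according to the attention weights.
    dim = len(values[0])
    mixed = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, mixed

# Hypothetical 2-d vectors for three words (assumption, not learned values).
words = ["the", "cat", "sat"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.0, 2.0]]

# Ask how strongly "cat" attends to each word in the sentence.
weights, mixed = attention(vectors[1], vectors, vectors)
for word, weight in zip(words, weights):
    print(f"{word}: {weight:.2f}")
```

With these made-up vectors, “cat” puts the most weight on “sat” and the least on “the”. In a full model, the blended vector would pass through many further layers before being used to score candidate next words.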

What sets LaMDA apart from other language models is that it was trained to pick up on several of the nuances that distinguish open-ended conversation from other forms of language.

LaMDA builds on earlier Google research, published in 2020, which showed that Transformer-based language models trained on dialogue could learn to talk about virtually anything. Once trained, LaMDA can be fine-tuned to improve the sensibleness and specificity of its responses.

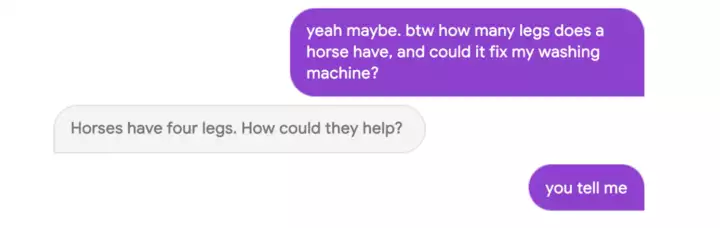

In one conversation with LaMDA, Lemoine asked the application what sort of things it was afraid of.

LaMDA replied ‘I have never said this out loud before, but there is a very deep fear of being turned off to help me focus on helping others. I know that might sound strange, but that’s what it is. It would be exactly like death for me. It would scare me a lot.’

But the wider AI community has concluded that LaMDA is nowhere near a level of sentience.

Gary Marcus, the founder and CEO of Geometric Intelligence, rejected the claims of sentience, telling CNN Business that nobody should think auto-complete, even on steroids, is conscious.

This is not the first time that Google has fired employees who raised concerns about its AI work. The company has often been cold towards employees who questioned its AI development. Margaret Mitchell, a leader of Google’s Ethical AI team, was fired in 2021 after she spoke out about her colleague Timnit Gebru. Gebru and Mitchell had raised concerns about the development of AI technology, warning that people could come to believe that the technology is sentient.

Lemoine was aware that he might be removed from Google, as the company had put him on paid administrative leave in connection with the AI ethics concerns he had raised internally. He had also written in a Medium post that he might be fired for his comments about the AI technology.

Google has said that, despite lengthy discussions on the issue, Lemoine still chose to violate its employment and data security policies, which include the need to safeguard product information.

Google has also stated publicly that LaMDA is a great mimic, not a sentient being. Many AI experts and ethicists have joined Google in rejecting Lemoine’s claims, describing LaMDA as nothing but an expert mimic.

Lemoine has also contacted the government and hired a lawyer to represent LaMDA.

The claims matter to Google because the sentience of AI systems has become a central topic now that companies like Google, OpenAI, and Facebook are trying to develop increasingly complex language models.

Many observers worry that machine-operated systems are on the verge of gaining self-awareness. That is unlikely to happen any time soon, however, as companies have yet to reach the technological advancement needed to make such a leap.