Following objections from privacy groups and industry rivals, Apple has postponed the launch of its new tool that would detect photographs depicting child abuse on iPhones. The tool was announced last month and was scheduled to launch in the US later this year.

What is the new software, and how would it have worked?

Apple announced last month that it would roll out a two-pronged tool that scans photographs on its devices for content that could be classified as Child Sexual Abuse Material (CSAM).

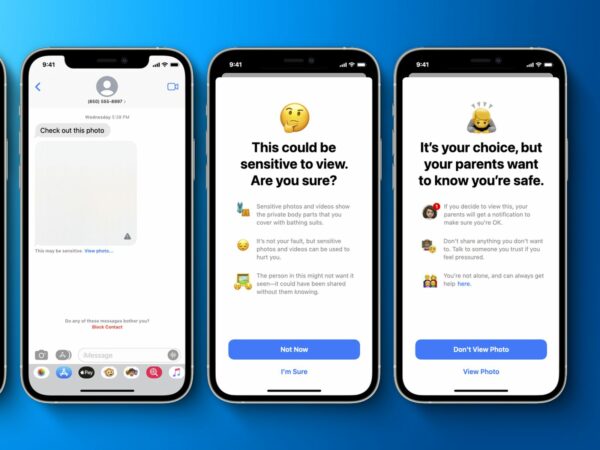

As part of the mechanism, Apple’s software, neuralMatch, would check photos before they are uploaded to iCloud, its cloud storage service, and examine the content of messages sent on its end-to-end encrypted iMessage app. “The Messages app will use on-device machine learning to warn about sensitive content, while keeping private communications unreadable by Apple,” the company had said.

Apple’s neuralMatch compares the photos against a database of child abuse imagery, and when there is a suspected match, Apple’s staff will manually review the photos. If the photos are confirmed to depict child abuse, the National Center for Missing and Exploited Children (NCMEC) in the US will be informed.

About CSAM detection

A critical concern today is the spread of Child Sexual Abuse Material (CSAM) on the internet. CSAM refers to content that depicts sexually explicit activities involving children.

To help address this issue, new technology in iOS and iPadOS will enable Apple to detect known CSAM images stored in iCloud Photos. It will allow Apple to report these cases to the National Center for Missing and Exploited Children (NCMEC). NCMEC acts as a comprehensive reporting center for CSAM and works in collaboration with law enforcement agencies across the United States.

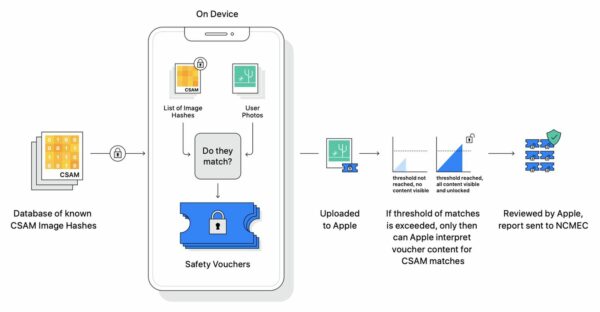

Apple’s method of detecting known CSAM is designed with user privacy in mind. Instead of scanning images in the cloud, the system performs on-device matching using a database of known CSAM image hashes provided by NCMEC and other child safety organizations.

Apple further converts this database into an unreadable set of hashes that is securely stored on users’ iPhones and iPads.

Before a photo is stored in iCloud Photos, an on-device matching process is performed for that image against the known CSAM hashes. This matching process is powered by a cryptographic technology called private set intersection.

This technology determines whether there is a match without revealing the result. The device creates a cryptographic safety voucher that encodes the match result and additional encrypted data about the image. This voucher is uploaded to iCloud Photos along with the image.
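The matching step can be pictured with a deliberately simplified sketch. It assumes a plain set lookup, and it uses SHA-256 as a stand-in hash; the function names and sample byte strings are hypothetical. It also leaves out private set intersection entirely, so unlike the system described here, this toy version reveals the match result on the device.

```python
import hashlib


def image_hash(image_bytes: bytes) -> str:
    """Stand-in hash (an assumption for this sketch).

    SHA-256 only matches byte-identical files. A production system would
    need a perceptual hash so that resized or re-encoded copies still match.
    """
    return hashlib.sha256(image_bytes).hexdigest()


def build_database(known_images) -> frozenset:
    """Turn a collection of known images into a set of hashes."""
    return frozenset(image_hash(img) for img in known_images)


def matches_known(image_bytes: bytes, database: frozenset) -> bool:
    """Check one photo against the hash database before upload."""
    return image_hash(image_bytes) in database


# Hypothetical placeholder data, not real image content.
known_db = build_database([b"known-image-1", b"known-image-2"])

print(matches_known(b"known-image-1", known_db))  # True
print(matches_known(b"holiday-photo", known_db))  # False
```

Only hashes are stored in the database, never the images themselves, which is the property the article describes; hiding the lookup result from the device is the part that the cryptography adds on top.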

Using another technology called threshold secret sharing, the system ensures that Apple cannot read the contents of the safety vouchers unless the iCloud Photos account crosses a threshold of known CSAM content. The threshold is set to provide an extremely high level of accuracy and ensures less than a one in one trillion chance per year of incorrectly flagging a given account.
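To see why requiring several matches lowers the chance of flagging an account by mistake, consider a rough model in which each photo independently produces a false match with some small probability. The sketch below computes the probability of reaching a threshold under that assumption. The per-photo rate, library size, and threshold are hypothetical values chosen for illustration; they are not figures published by Apple.

```python
import math


def prob_at_least(num_photos: int, per_photo_rate: float, threshold: int) -> float:
    """P(X >= threshold) for X ~ Binomial(num_photos, per_photo_rate).

    Terms are computed in log space so large binomial coefficients
    do not overflow. Requires 0 < per_photo_rate < 1.
    """
    total = 0.0
    for k in range(threshold, num_photos + 1):
        log_term = (
            math.lgamma(num_photos + 1)
            - math.lgamma(k + 1)
            - math.lgamma(num_photos - k + 1)
            + k * math.log(per_photo_rate)
            + (num_photos - k) * math.log1p(-per_photo_rate)
        )
        total += math.exp(log_term)
    return total


# Hypothetical numbers for illustration only.
photos_per_year = 10_000
false_match_rate = 1e-6

for t in (1, 5, 30):
    print(t, prob_at_least(photos_per_year, false_match_rate, t))
```

Under these assumed numbers, moving the threshold from 1 to 30 shrinks the probability of a mistaken flag by many orders of magnitude, which is the general effect the threshold is meant to achieve.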

Only when the threshold is exceeded does the cryptographic technology allow Apple to interpret the contents of the safety vouchers associated with the matching CSAM images.
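The idea of a secret that stays unreadable until enough pieces exist can be illustrated with Shamir's secret sharing, one classic threshold scheme. This is a minimal sketch: the article does not say which construction Apple uses, and the function names, the prime, and the sample key below are assumptions for illustration.

```python
import secrets

# A Mersenne prime; all arithmetic is done modulo this value.
PRIME = 2**127 - 1


def split_secret(secret: int, threshold: int, num_shares: int):
    """Split `secret` into shares; any `threshold` of them recover it."""
    # Random polynomial of degree threshold-1 whose constant term is the secret.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]
    shares = []
    for x in range(1, num_shares + 1):
        y = 0
        for c in reversed(coeffs):  # Horner evaluation of the polynomial at x
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover_secret(shares) -> int:
    """Lagrange interpolation at x = 0 over the prime field."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        # pow(den, -1, PRIME) is the modular inverse (Python 3.8+).
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret


key = 123456789  # hypothetical decryption key
shares = split_secret(key, threshold=3, num_shares=5)

print(recover_secret(shares[:3]) == key)  # True: threshold reached
print(recover_secret(shares[:2]) == key)  # almost certainly False: too few shares
```

In this picture, each matching image would contribute one share of a key, so the vouchers only become readable once the number of matches reaches the threshold.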

Apple then manually reviews each report to confirm there is a match, disables the user’s account, and sends a report to NCMEC. Users who feel their account has been mistakenly flagged can file an appeal to have it reinstated.

This new technology allows Apple to provide valuable and actionable information to NCMEC and law enforcement regarding the proliferation of known CSAM.

It does so while providing significant privacy benefits over existing methods, since Apple only learns about users’ photos if they have a collection of known CSAM in their iCloud Photos account. Even in these cases, Apple only learns about images that match known CSAM.

What were the concerns raised?

It has been only a month since the Pegasus scandal, involving powerful economies such as India and Israel, shook the world. Powerful countries like India and the US have time and again violated their citizens’ privacy.

As technology advances, people have become more vulnerable to surveillance by controlling governments around the world. At such a time, Apple’s announcement of this software has raised concerns. Some social media apps have also altered their privacy policies, which has already made people question the motives of their governments.

It is also a fact that child pornography and child sexual abuse have increased over time. Several governments have enacted stringent laws against child sexual abuse, but such efforts are not enough.

In the present technologically advanced world, it has become challenging to curb such crimes. Hence, for some, such technology is the need of the hour.

While child protection agencies are embracing the move, digital privacy advocates and industry rivals are raising objections, arguing that the technology could have broad implications for user privacy. Critics say it is nearly impossible to build a client-side scanning system solely for sexually explicit photos sent or received by children without such software being repurposed for other uses.

The announcement once again put the spotlight on governments and law enforcement agencies seeking a way into encrypted services. Will Cathcart, the head of WhatsApp, the popular end-to-end encrypted messaging service, said: “This is an Apple built and operated surveillance system that could very easily be used to scan private content for anything they or a government decides it wants to control. Countries where iPhones are sold will have different definitions on what is acceptable.”

Why has Apple delayed the rollout?

In a statement, Apple said it would take more time to collect feedback and improve the proposed child safety features, following criticism of the system on privacy and other grounds from both outside and inside the company. “Based on feedback from customers, advocacy groups, researchers and others, we have decided to take additional time over the coming months to collect input and make improvements before releasing these critically important child safety features,” it said.

According to Reuters, Apple had been defending the plan for weeks and had already offered a series of explanations and documents to show that the risks of false detections were low.